We have all stood, at some point in our lives, in a long security line at an airport, waiting for our face to be matched to the photograph on our identification card. And inevitably, there are passengers slowing the line down because they now wear spectacles, have dyed their hair, or have shaved off their beard since the photograph was taken.

This raises the question: how reliable is such a verification system? All it takes is one tired moment from one human being for a fraud to go undetected.

The fallibility of human nature in performing tedious tasks such as face recognition and identification could lead to disastrous consequences.

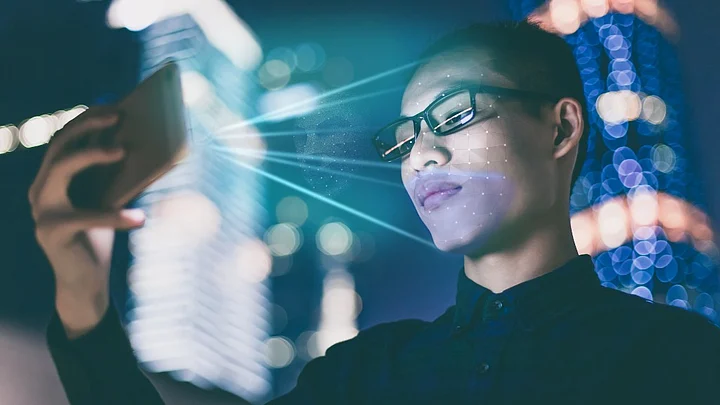

However, as in so many other fields, technology has certainly come to the rescue here too. Facial recognition systems have been in the works for a while now, along with other biometric systems such as fingerprint and iris authentication.

The unique selling point of such systems is highly accurate identification, free of human error or bias. We now see commercial deployments of facial recognition systems, be it at Changi Airport in Singapore for security, or paying for burgers with a smile while earning loyalty points at CaliBurger in California.

Among the big five in the information technology industry, Facebook has adopted facial recognition technology to tag people in photos, whereas Apple’s iPhone X uses it as a security measure to unlock the phone.

Particularly notable is Horus, a socially impactful device that visually impaired people can use to recognize old friends with the help of the latest face recognition technology.

Future Headed There

Facial detection – finding where the face lies in a photo – is usually done using standard image processing techniques. Facial recognition is the process of matching the face to an identity. At the heart of the facial recognition system is usually a deep learning solution.

Deep learning is a subset of machine learning methods that loosely mimics the connections in the human brain in the form of an artificial neural network, allowing machines to learn without being explicitly programmed.

A large data set of faces is used to train the neural network, which is then deployed for commercial usage. In the absence of a good data set for training, we can use pre-trained deep learning models, which apply the knowledge acquired from one domain of data to another domain (an approach known as transfer learning).
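To make the matching step a little more concrete, here is a minimal sketch in Python. It assumes, as is common in such systems, that a trained network has already turned each face into a short list of numbers (an "embedding"), so that recognition reduces to finding the closest stored embedding. The names `identify`, `gallery` and `probe`, the three-number embeddings and the threshold of 0.8 are all illustrative assumptions, not details of any particular product.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two embeddings: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(probe, gallery, threshold=0.8):
    """Return (name, score) for the closest enrolled face.

    The name is None when even the best match scores below the
    threshold, i.e. the face is treated as unknown. The default
    threshold is an arbitrary value chosen for this illustration.
    """
    best_name, best_score = None, -1.0
    for name, embedding in gallery.items():
        score = cosine_similarity(probe, embedding)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score
    return best_name, best_score


# Hypothetical enrolled faces; real embeddings have hundreds of
# dimensions and come from a trained neural network.
gallery = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.1, 0.8, 0.5],
}

probe = [0.85, 0.15, 0.35]    # alice again, slightly changed appearance
stranger = [-0.5, 0.2, -0.7]  # someone who was never enrolled

print(identify(probe, gallery))
print(identify(stranger, gallery))
```

In a sketch like this, the threshold carries much of the risk: set it too loosely and a close lookalike may be accepted, set it too strictly and the genuine owner with new spectacles may be turned away.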

This new technology does well even with image transformations and minor modifications to the facial features. In fact, one can easily imagine its use in modeling variations of a given face, to identify criminals and terrorists on the run.

Not Without Pitfalls

However, none of the above applications is without faults. There were reports of the iPhone X being unlocked by the owner’s children who bore a strong resemblance to their parents.

Online media seemed to carry more articles detailing how to turn off Facebook photo tagging than articles explaining what the feature is supposed to do. Google's face recognition blurs out the face of one of Australia’s kitsch sights – the Big Prawn, a giant statue of a prawn – to protect its identity (the identity of a prawn!).

Large-scale deployments of facial recognition systems are still limited due to the costs involved in replacing existing systems as well as glitches that can be potentially dangerous, especially with security systems.

Let us also consider the privacy issues that are raised here. User privacy is of utmost importance; when collecting large databases of faces, we need to make sure that the people to whom these faces belong are aware of and amenable to this usage.

For example, Illinois and Texas – two states in the USA – have enacted biometric privacy laws (in Illinois, the Biometric Information Privacy Act) to ensure judicious use of such biometric information. Courts in Illinois have ruled that face templates created from uploaded photos (which are then used for face recognition) are covered under the privacy act, and cannot be used without the explicit permission of the owner.

Also consider shops that are using face recognition technologies to identify potential shoplifters, celebrities, known miscreants, etc. This would qualify as a clear case of privacy intrusion. Gender and emotion identification systems are also being used for targeted marketing campaigns.

The industry does not appear to take concerns regarding personal identification very seriously. How do we maintain the balance on the fine line between technological advances and individual privacy?

Although significant strides have been made in the field of facial analysis, the technological best is yet to come. Sophisticated algorithms and larger data sets will only improve the accuracy of face recognition.

And yet, we need to examine whether such systems diminish the obscurity that each individual takes for granted in today’s society.

Widespread deployment of such technology and easy availability of databases that map our faces to our names take away from this obscurity. Although our faces are routinely seen in public and our names are used without restriction, being able to map faces to names in a heartbeat seems like an infringement of personal privacy.

Should we treat our faces as our fingerprints and signatures? That is a question that needs to be answered – legally and socially – before we see the proliferation of face recognition systems.

(The author has a doctorate in Distributed Systems from Yale and is a Data Scientist at Persistent Systems Limited.)
