Koo shot to prominence in February 2021 when a number of cabinet ministers and prominent political figures joined the app after Twitter refused to fully comply with MeitY’s orders to take down several accounts, stating that doing so would be “inconsistent with Indian law”.

Since then, India’s “homegrown Twitter” has gained a reputation as a sanctuary for right-wingers and a hotbed of anti-Muslim hate speech. Is Koo’s content moderation lax by design? Or is it a product of the kind of capacity problem that even giants like Facebook and Twitter have been struggling with for years?

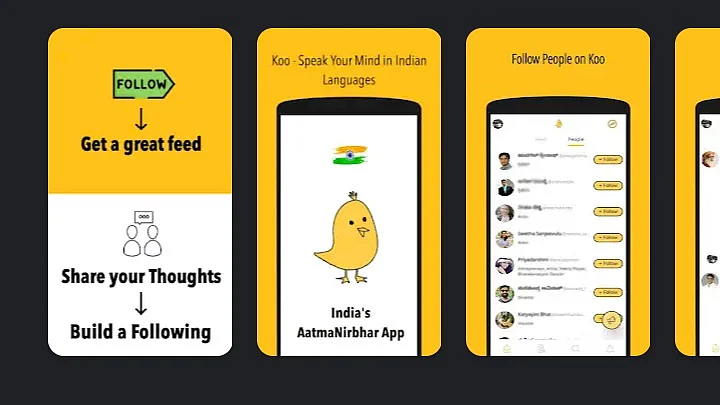

The Hitchhiker’s Guide to Koo

Koo’s Community Guidelines prohibit hate speech and discrimination:

“We do not allow any forms of hateful, personal attacks, ad hominem speech, or uncivil disagreement which are intended to harm another user or cause them mental stress or suffering. Examples of hateful or discriminatory speech include comments which encourage violence, racially or ethnically objectionable, or disparage anyone based on their national origin, sex, gender identity, sexual orientation, religious affiliation, disabilities, or diseases. We also prohibit content which could encourage other users to share such content.”

The platform also enables users to report Koos that violate its guidelines. It does not, however (as the author found after reporting a Koo), take down posts that are blatantly false or misleading, nor does it give any indication of the process or expected timeline for a decision. This is likely because the app’s content moderation is entirely community-driven and manual: none of the mainstays of social media platforms, such as algorithms that automatically flag posts and restrict accounts, have made their way to Koo.

Koo’s Inability to Meet the Challenges of Content Moderation

Koo is not alone in the Indian social media app ecosystem in being unable to meet the challenges of content moderation. For instance, Chingari, which along with Koo was one of the 24 companies that won the Aatmanirbhar App Challenge, has been struggling to keep up with its growing user base.

Koo management’s response to criticism about its handling of content has been tepid at best. On 4 May 2021, co-founder Aprameya Radhakrishna announced that the company would institute an oversight board, taking heavy inspiration from Facebook, to consult on specific cases and provide direction and/or course correction for its content practices:

“Anything black and white and what we have learnt in the past, will be handled by the guidelines we have already published. If some case misses the guidelines, (where) we haven’t thought of the scenario, and we don’t know what the right stance is or what needs to be done, those cases will go to the advisory board.”

The move, while encouraging, is a band-aid on a bullet wound, particularly given understaffing and the continued absence of publicly available content moderation guidelines and transparency reporting.

Dog Whistles on Koo

In the curious case of Koo’s content moderation, it is also important to analyse what is unsaid but implied. The app has often drawn comparisons with US-based alt-tech micro-blogging site Parler, not just because the two came to prominence during the same timeframe, pitching themselves as havens of “free speech” and Atmanirbharta, but also because anti-minority hashtags, outlandish conspiracy theories and outright disinformation abound on both platforms.

Koo’s founders have also recently welcomed public figures spurned by Twitter to their platform to continue to peddle hate speech and violence “with pride”. The latest example is Kangana Ranaut.

Whether or not Koo, the company, explicitly endorses any of the harmful content on its platform, the fact remains that its user base is predominantly right of right wing. The echo chambers Koo creates, sealed in by poor enforcement of community guidelines and unfettered by a diversity of opinions and constructive criticism, may not just remain incidental to the platform, but become the business model itself.

Can ‘Technonationalism’ Shield Koo From Criticism?

This is, in a way, a perversion of technonationalism, the linking of technology with national identity, where the “local”, “self-sufficient” characterisation of a technology product may be used to shield it from criticism.

Whether Koo becomes India’s Twitter, its Parler or something completely new hinges on how it rises to the challenge of creating online spaces that foster free speech without denigrating minorities and vulnerable communities.

(Trisha Ray is an Associate Fellow at ORF’s Technology and Media Initiative. She is also a member of UNESCO’s Information Accessibility Working Group. She tweets at @trishbytes. This is an opinion piece and the views expressed above are the author’s own. The Quint neither endorses nor is responsible for the same.)