What India’s IT Rule Amendments Mean for Anyone Who Speaks Online

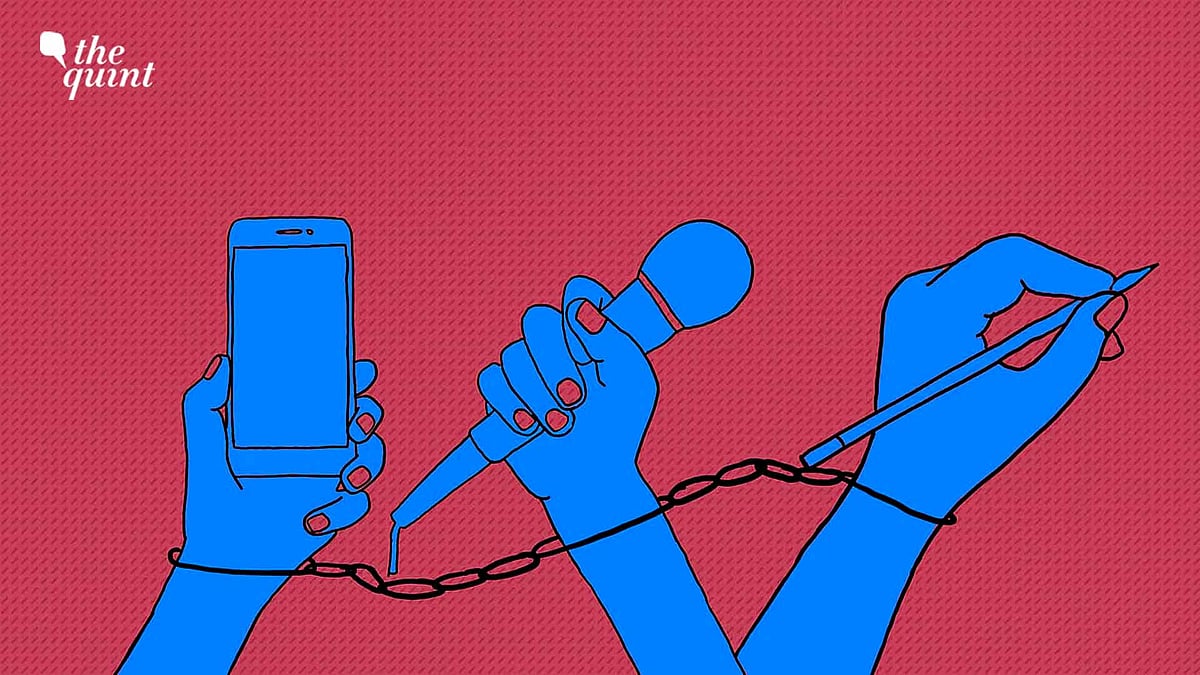

The law can fundamentally reshape how people speak, and are heard, online, writes DevRupa Rakshit.

On 30 March, the Transgender Persons (Protection of Rights) Amendment Bill, 2026 received the President’s assent to become law, despite weeks of unrelenting protests led by the country’s trans community, whom it claims to ‘protect.’

On the same day, the Ministry of Electronics and Information Technology (MeitY) proposed amendments to the IT Rules, 2021, with a 15-day window for public consultation. Fifteen days for changes that, if implemented, could significantly alter how online speech is governed in India.

Framed as “clarificatory and procedural”, the amendments—as the Internet Freedom Foundation (IFF) pointed out—instead mark a substantial expansion of executive power over digital content.

Who Gets to Speak, and at What Cost

That’s, perhaps, one of the reasons why the discourse around the proposed amendments has been muted.

But for journalists working in India today—especially those of us working independently, across formats and platforms—social media is one of the most important tools of distribution.

Which is also what makes the prospect of regulating it in the proposed ways… somewhat frightening. Dressed in the language of procedure, prima facie, the changes may seem technical. Their implications, though, are anything but.

The rational response, then, is over-compliance, and by extension, over-censorship. Similarly, the expansion of the Inter-Departmental Committee’s scope from hearing ‘complaints’ to examining vague ‘matters’ opens the door to discretionary scrutiny of content, including user-generated political speech.

And when intermediaries are made responsible for interpreting and acting on loosely defined categories, the safest interpretation becomes the narrowest one because the cost of getting it wrong is no longer abstract.

Surveillance and Control

Add to that the possibility of extended data retention requirements, and what begins to emerge is a framework for surveillance and control.

“I want to make content highlighting the government's negligence and malpractices. I want to question them and hold them accountable. I want to amplify ground reality using my platform,” says Archana Das, a content creator.

Citing examples of political dissenters like Sonam Wangchuk, who was imprisoned for nearly six months, and Umar Khalid, whose bail pleas have been rejected since he was imprisoned in September 2020, Das adds,

What emerges, then, isn’t just fear of violating any law per se, but simply being accused of doing so, which can draw one into processes that are punitive regardless of outcome.

Rastogi echoes this too, saying,

The Quiet Workings of Self-Censorship

The idea that the only thing keeping one’s work safe might be its relative obscurity becomes a working assumption because to grow is to be seen, to be seen is to be legible, and to be legible is to be acted upon. This doesn’t produce silence, at least not immediately. What it produces, instead, is calibration.

“The only thing that has potentially kept my content under the radar is its limited reach and modest following. And, therefore, I have also avoided actively investing more time and effort in growing the reach of my content,” says Maansi Verma, lawyer and founder of the civic engagement initiative Maadhyam.

Over time, the recalibration may feel almost imperceptible (a caption softened here, a post abandoned there, a joke that feels easier to just not make) until the version of what one would have preferred to say begins to feel increasingly out of reach.

In time, the trade-off Verma alluded to starts to reshape what growth even means since visibility, in this context, is as much an opportunity as it is exposure. And with the consequences it carries, the latter isn’t something everyone can afford.

But for creators whose work is political—especially those who sit outside or against dominant narratives—this isn’t entirely new.

It Doesn't Feel Personal, Until It Does

What can change, then, is less the existence of that risk, and more how it is distributed, normalised, and, crucially, automated. What was once sporadic now feels more systemic.

But as one must, creators are figuring out how to adapt to this shift without necessarily conceding to it.

“Will this amendment affect my content? It’s designed to. That’s the point. But here’s the thing... I run Goa’s first woman-led independent digital news platform, and I didn’t start it because it was easy. I started it because someone had to do honest journalism without waiting for permission from Delhi, or from a businessman,” says Misbah Quadri, founder and editor of MQM24x7.

But the fact remains that all of this is still unfolding against a backdrop where attention itself is unstable. Over the past few years, public discourse has moved from one flashpoint to another—each urgent, each overwhelming, each demanding to be the centre of focus.

In 2026 alone, in addition to the world maybe, maybe not being on the brink of another war and the LPG shortage it has resulted in, Indian news cycles have covered debates around caste-based reservation, the erasure of trans folks, state elections, and now, the amendment to the IT Rules.

Obviously, most of these are pertinent human rights concerns deserving of national attention and widespread agitation. But, together, their simultaneity makes it harder to hold on to any one shift long enough to even fully register what it changes, let alone realise the cumulative magnitude of the changes. And that’s probably by design.

The Cost of Being Seen

It’s also how this has always worked — as a series of targeted incursions, each affecting a group that can be isolated, debated, discredited, or simply ignored, until the circle of who is ‘at risk’ expands almost stealthily to include more and more of us, echoing what Martin Niemöller wrote decades ago in ‘First They Came.’

They come first for those who can be othered more easily because of existing socio-political biases, and because it doesn’t feel immediate or personal, it is easy to look away… until it isn’t.

And by the time the shift becomes visible — if it is ever allowed to become visible in ways that cannot be dismissed, that is — it has already settled in, as a state-enabled narrowing of the public sphere itself, where the terms of participation are no longer negotiated collectively but dictated through policy, platform compliance, and the threat of punitive process.

The Algorithm of Caution

And by rendering self-censorship inevitable, what these moments begin to reveal is how the language of protection can become a convenient alibi for expanding control — whether over the lives of trans people in law or over the terms of public expression online — until dissent itself begins to disappear, as it did in George Orwell’s 1984.

(DevRupa Rakshit is a queer, autistic individual, ARTivist and independent multimedia journalist based in Bangalore. This is an opinion piece. All views expressed are the author’s own. The Quint neither endorses nor is responsible for them.)