Generative AI Images Are Deceiving Everyone.

We Made a Cheatsheet To Debunk Them

Images created by Artificial Intelligence shouldn’t be intelligent enough to fool you into believing they are real. So, how do you tell AI images from real ones?

This multimedia immersive tells you how.

Despite the explosion of generative AI-powered art tools like Midjourney, Stable Diffusion, OpenAI’s DALL-E, and others, artificial intelligence hasn't perfected making images just yet.

So, get ready to channel your inner Sherlock as we explore the most common telltale signs in AI-made images, so you can spot them better. We begin with that cliché of fact-checking: TAKE A CLOSER LOOK.

THE FACE

Beauty lies in the eyes of the beholder, and so do the clues when it comes to AI-generated images.

Let's examine this AI-generated image of PM Modi, which was shared on social media as a photo of him at an event, wearing extravagant attire.

- The colours of his eyes do not seem to match in this image.

- His glasses look like they are merging with his face.

Distorted faces in the background provide yet another hint that the image isn't a real one.

Out-of-context facial expressions and overly textured or glistening skin are common indicators of AI-generated images.

This image went viral along with a few other similar ones, all claiming that they showed former United States President Donald Trump resisting arrest.

This image has a couple of glaring discrepancies.

Here, even though Trump's face is in focus, his hair appears to be blurred.

Plus, the face of the police officer highlighted here is entirely hazy!

SCIENTIFIC ERRORS

While most students can relate to struggling with some topics in science, AI art tools often generate images that lack a basic understanding of the laws of physics.

We asked a free AI art tool named BlueWillow to generate an image of Pope Francis carrying an umbrella and sitting on a beach.

Here's what it came up with.

Weirdly enough, the handle of the umbrella isn't anywhere close to the centre. Pope Francis can be seen holding the handle to one side of the umbrella.

However, the umbrella is NOT lopsided and sits exactly where it should. Basically, one look at the handle and you know that there's something definitely off about the image!

Remember what we mentioned in the previous examples on Modi and Trump? Look closely at the face, always!

This image of the Pope has an eerie-looking darkened area around his eyes and nose, which hints at the image not being a real photograph.

OBJECTS OR ACCESSORIES

Don't be surprised if you come across an image where there is something wrong with inanimate objects. This is because AI art tools often do not understand the structure and functionality of some objects.

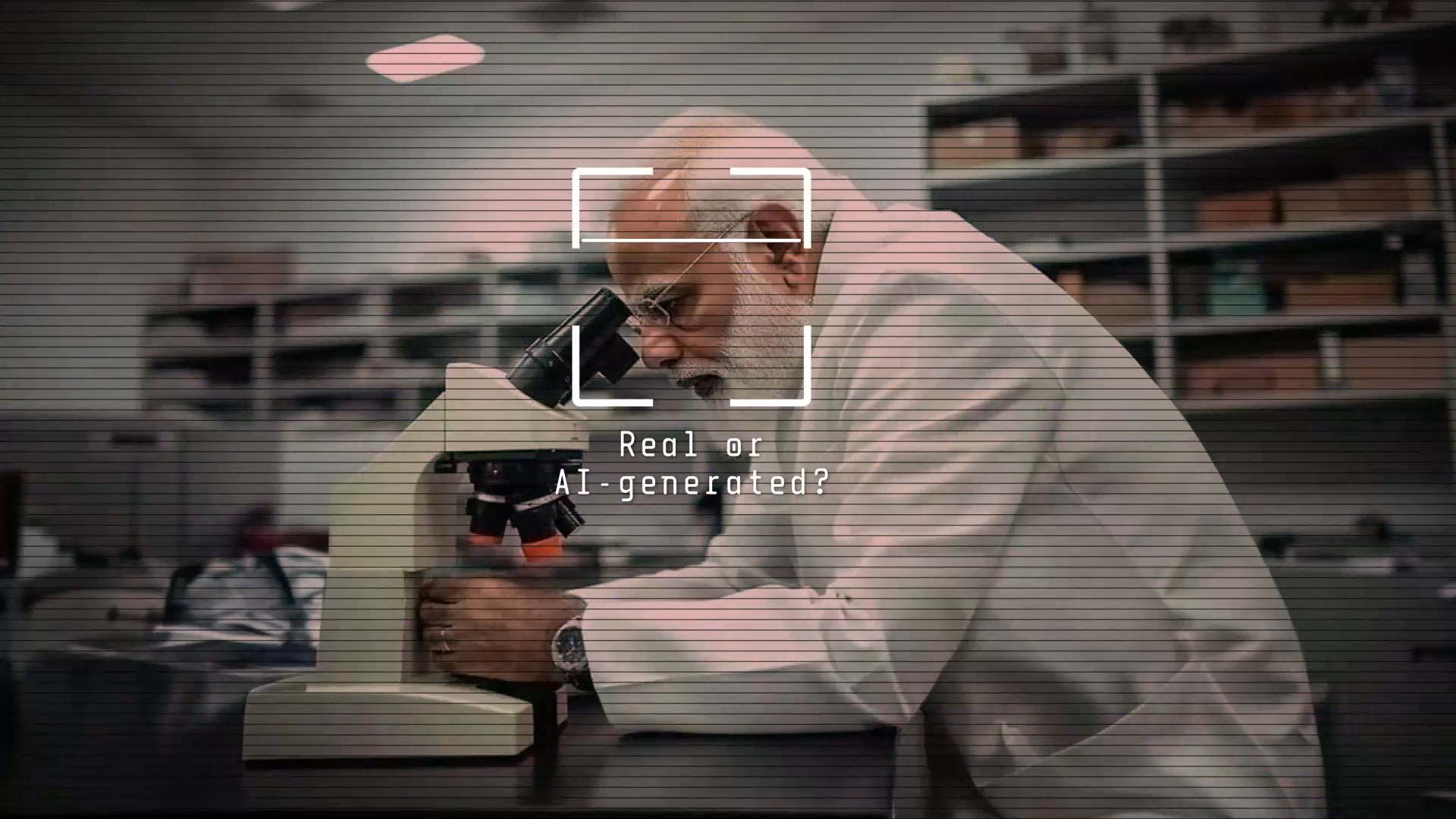

This image of PM Modi shows him looking into a microscope, and was shared across social media platforms.

Except, if you look carefully, you'll see that Modi's eyes aren't looking into the lens of the microscope — his forehead is!

Upon checking the original post which shared this image, we saw that the uploader had credited #aiart and #midjourney in the caption, and our doubts about the authenticity of the image were confirmed.

The AI tool used to create this image clearly made an error in accurately depicting how a human being would use a microscope.

This is a perfect example of why you should focus on objects and accessories in an image when checking whether it was made by AI.

The images below were posted on Twitter and were generated using Midjourney.

In this image, you can see that one pedal of the unicycle seems to be missing, and the rider's leg is hanging in the air!

This image shows the pedals of the unicycle ahead of the tyre instead of on its sides, which is not how a unicycle works!

MEANINGLESS LETTERS

AI tools don't seem to be well-equipped to depict text on objects and accessories.

While the tools can occasionally generate meaningful text, they often give results where the letters in the image do not form a real word.

Don’t believe us? Look at another viral AI image that was claimed to depict Trump’s arrest.

A closer look will show letters written on the badges and caps of the police officers.

The letters shown here don't even make a proper word!

Here's another example. When we asked the AI art tool BlueWillow to generate an image of a man holding a signboard, this is what it came up with.

Once again, do these words make sense to you or to any of us? Nope!

THE HANDS

In several AI-generated images, a person's misshapen hand gives away that the image was generated using AI tools and isn't a real photo.

Look at these two pictures which went viral with a claim that Anthony Fauci, former Chief Medical Advisor to the President of the United States, was arrested by the police.

However, the discrepancies in the images become visible when you look closely at the hands.

In this image, one of the policemen appears to have a fifth finger instead of a thumb!

And it gets weirder here. The right hand of this policewoman seems to be attached to her left arm! Once you spot it, there is no unseeing this...

LOOK FOR WATERMARKS

Some AI tools such as DALL-E add watermarks to the images that they generate.

The bottom-right corner of an image created by DALL-E contains a multicolour swatch that is meant to indicate that the image was generated using the AI tool.

But here is the concerning part. Not all tools have watermarks. Moreover, the images can be easily cropped to exclude the watermark.

So, watch out for watermarks, but just because an image doesn't have one doesn't mean it's a genuine photograph!

Now, it's time to put your AI-detection knowledge to the test!

What do you do when none of these tips work? Sometimes, AI creates extremely realistic images without any 'tells'. That's when a simple search can help.

Reverse Image Search to the Rescue

A reverse image search on the viral picture can often lead to the original source. A search can open up a lot of possibilities.

It might lead you to a sharper or better version of the image, which can then be used to search for the source.

It can also direct you to similar images available on the internet.

It can also help in finding older posts carrying the same image and news reports (if any).

The search can be performed using Google Lens or InVID WeVerify, a Google Chrome extension.

Watch the video below where we explain in detail how to perform a reverse image search.

Look for News Reports

One should also rely on credible news sources and media reports to verify images going viral on social media platforms.

Important events don't happen in isolation. If an image is claimed to be from such an event, there would almost invariably be news reports about it.

For example, this image was shared with a false claim that Russian President Vladimir Putin suffered a heart attack.

When we looked for news reports about this, we did not come across any.

A head of state suffering a heart attack and the incident receiving no media coverage is extremely unlikely, right? Yep, and the images of Putin you see above? They were generated using AI.

Can AI tools be used to counter the disinformation menace created by AI images? Several AI tools such as Hugging Face, Optic AI or Not, and Illuminarty have been developed to detect images generated using AI.

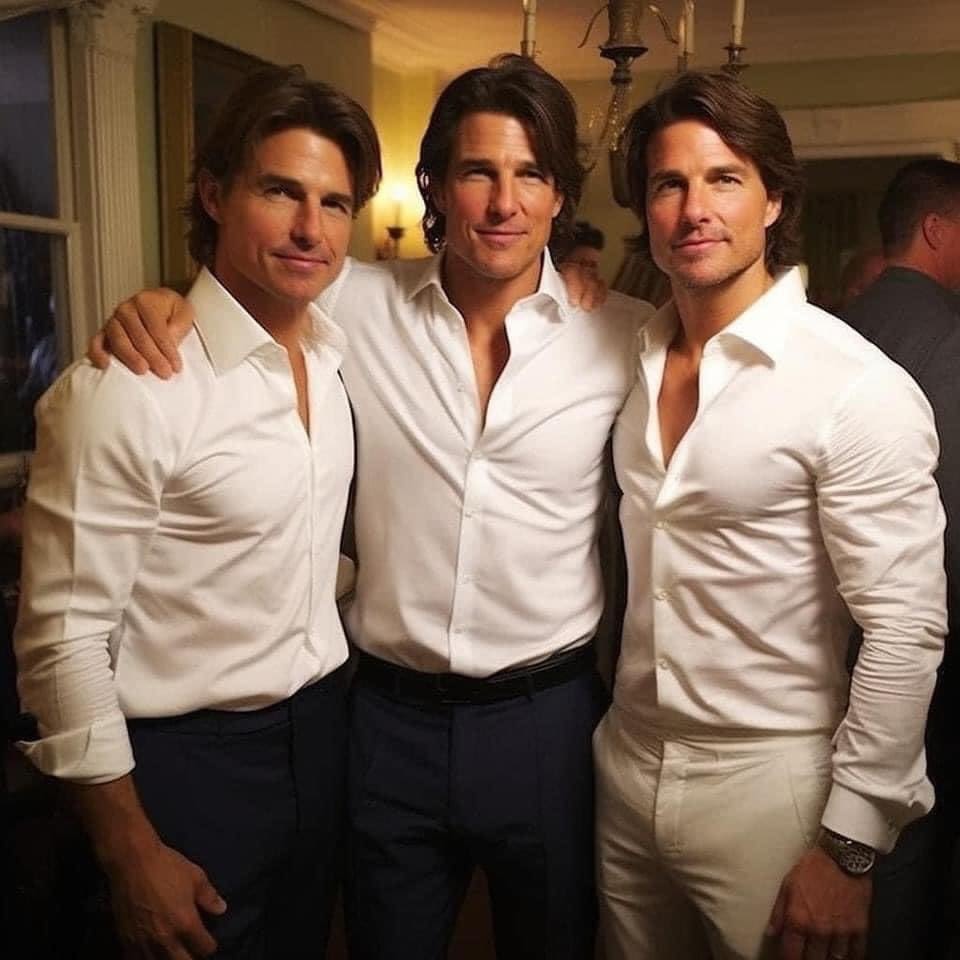

For example, The Quint’s WebQoof team debunked this image which was being shared as a photo of Hollywood actor Tom Cruise purportedly with his “stunt doubles,” ahead of the release of his film 'Mission: Impossible – Dead Reckoning Part One.'

The creator of this image, Ong Hui Woo, confirmed that he had made this image using the AI art tool Midjourney.

We used this image to check how well the AI-detector tools work in identifying AI-generated content.

Hugging Face pegged the chances of the photo being taken by a human at 87 percent, Illuminarty said that there was a 90.1 percent chance that the image was AI-generated, while Optic AI or Not was certain it was made by AI.
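Note that these three readings are not on the same scale: one is the chance that a human took the photo, while the others are the chance that AI made it. The rough Python sketch below puts them side by side. The class and function names are hypothetical, the 50 percent cut-off is an arbitrary choice of ours, and treating "certain" as 100 percent is our assumption rather than a figure reported by the tool.

```python
from dataclasses import dataclass


@dataclass
class DetectorReading:
    tool: str
    percent: float        # the figure the tool reported
    measures_human: bool  # True if the figure is "chance a human made it"


def ai_likelihood(reading: DetectorReading) -> float:
    """Convert a reading to 'percent chance the image is AI-generated'."""
    if reading.measures_human:
        return round(100.0 - reading.percent, 1)
    return reading.percent


def summarise(readings, threshold=50.0):
    """Return (tool, AI-likelihood, verdict) for each reading."""
    rows = []
    for r in readings:
        score = ai_likelihood(r)
        verdict = "AI" if score >= threshold else "human"
        rows.append((r.tool, score, verdict))
    return rows


readings = [
    DetectorReading("Hugging Face", 87.0, measures_human=True),
    DetectorReading("Illuminarty", 90.1, measures_human=False),
    # "Certain" is treated as 100 percent here; that is an assumption.
    DetectorReading("Optic AI or Not", 100.0, measures_human=False),
]

for tool, score, verdict in summarise(readings):
    print(f"{tool}: {score}% AI-likelihood -> leans {verdict}")
```

Seen this way, Hugging Face put the chance of the image being AI-generated at just 13 percent, which is why it disagreed with the other two tools on an image we knew to be AI-made.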

We used several other test images to see which of the AI-detection tools worked most accurately. One was this photo of a supposed explosion at the Pentagon, which went viral recently and caused a dip in America's financial markets.

The image turned out to be AI-generated.

While in most cases, Optic AI or Not seemed to do the best job at identifying which images were created by AI, the image of the supposed Pentagon explosion left all three AI-detection tools stumped.

These results do not paint a great picture. They show that these identifier tools are still developing and will take more time to improve their accuracy in identifying AI-generated images.

The severity of the disinformation problem caused by AI images should not be underestimated. Here's an example to show how quickly AI-generated images are being used to spread false narratives.

On 28 May 2023, it took an unknown individual just a few seconds to use an Artificial Intelligence (AI) tool to morph a photograph of wrestlers Vinesh and Sangeeta Phogat.

The actual photo showed them seated inside a police bus, being detained by the Delhi Police.

The AI-altered image showed them in the same position, in the same bus. The only difference? They were shown to be smiling instead.

"Sadak par drama karne ke baad yeh inka asli chehra (After their drama on the streets, here are their real expressions)." Messages such as this one were shared along with the fake photograph, and the image went viral. In minutes.

The Quint’s WebQoof team got to work, and we soon demonstrated that the image was edited and not a real photograph. Here's how.

But, as fact-checkers, we realised once again the enormity of the challenge in front of us.

AI-generated images and AI-created disinformation are here to stay, and they will evolve and upgrade rapidly as well.

But we will still be here, finding newer and improved ways to debunk them, and helping you do the same.

So, if you've got a doubt about an image and can't tell whether it's real or not, send it to us at Webqoof@thequint.com or WhatsApp us on +91 96436 51818.

And as that legendary old line goes, "Satark raho (Stay alert)!"

This multimedia immersive is Part Two of our project on AI's Disinformation Problem.

Read Part One here, on whether AI-powered chatbots will make an already bad misinformation problem worse.

The third part of this project is coming up very soon. Part Three will focus on the problems of media misreporting on AI-made content, and the measures that tech platforms and governments could take to tackle the menace of AI-aided disinformation. Stay tuned!

CREDITS

REPORTER

Abhishek Anand

REPORTER

Aishwarya Varma

CREATIVE PRODUCER

Naman Shah

GRAPHIC DESIGNER

Kamran Akhter

GRAPHIC DESIGNER

Aroop Mishra

SENIOR EDITOR

Abhilash Mallick

CREATIVE DIRECTOR

Meghnad Bose
