Imagine a situation where a week before an Assembly election, a deepfake video of Bahujan Samaj Party (BSP) supremo Mayawati announcing that she has extended her support to the Bharatiya Janata Party (BJP) goes viral. Two days later, another edited video of her announcing her party's alliance with the Congress is shared.

These are no longer scenes from a dystopian future but deepfake videos that The Quint's WebQoof team debunked in the run-up to the 2023 state elections in Madhya Pradesh.

With generative AI advancing rapidly, tech experts and political strategists believe that deepfakes are bound to have an impact on politics. On the one hand, they could be used to target opponents by showing them saying inappropriate things; on the other, they could be used to create disinformation and confuse the electorate.

Spread Disinformation, Form Narratives: How Deepfakes Could Impact Elections

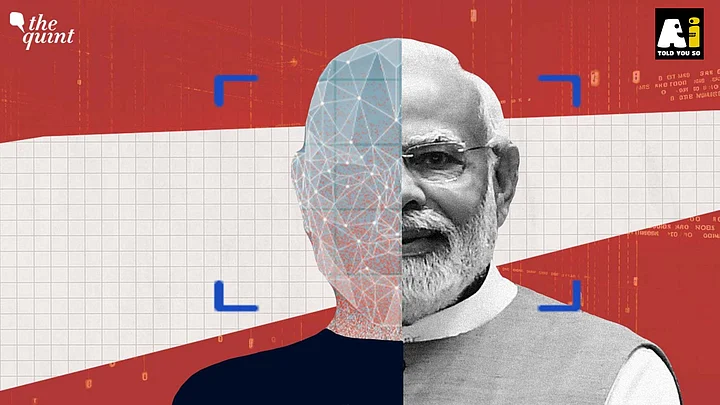

The possible misuse of deepfake technology was also raised by Prime Minister Narendra Modi, who, while addressing a gathering at the BJP headquarters in New Delhi on 17 November, said, "A new crisis is emerging due to deepfakes produced through AI."

After PM Modi expressed his concerns, the Union government said that it would soon introduce regulations to tackle the spread of deepfakes on social media platforms.

But what was the impact of deepfakes in the recent Assembly elections? How could generative AI help form narratives on the internet? What are the possible giveaways when dealing with deepfakes? The Quint explains.

This article is a part of 'AI Told You So', a special series by The Quint that explores how Artificial Intelligence is changing our present and how it stands to shape our future.

How Will Generative Media Impact Politics?

To understand how deepfakes and generative AI content might help a politician over the course of the elections, The Quint reached out to Samarth Saran, co-founder of a political consultancy firm.

"Generative AI and deepfakes have the potential to create favourable and appealing portrayals of politicians, amplifying their appeal. For example, they can craft a deepfake showcasing a politician delivering an inspiring speech or appearing to assist someone, even if it didn't happen. Similarly, fake content tailored to appeal to a politician's constituents can be generated."

Samarth Saran, co-founder of a political consultancy firm

Saikat Dutta, CEO of the think tank DeepStrat, told The Quint that he does not believe deepfakes and generative content will significantly improve the popularity of politicians.

"There is a confirmation bias on which disinformation plays out. So it will work more from a negative perspective to decrease the popularity of politicians. It will be used to create disinformation and confusion among voters."

Saikat Dutta, CEO of DeepStrat

A recent New York Times report found that Argentina's election became the first to be impacted by AI. It said that the two contesting candidates and their supporters adopted the technology to alter existing images and videos and even create some from scratch.

The use of deepfakes and generative AI in election campaigns is a major concern for India, especially ahead of the 2024 Lok Sabha elections. Not only can they be used to depict incidents that didn't happen, but they can also be used to frame a political opponent.

While talking about AI's impact and its use in forming narratives on social media platforms, Saran mentioned how political parties have employed half-truths or edited videos in the past to create false narratives about their opponents.

He further said, "Now, imagine possessing technology and resources that enable the creation of videos depicting your opponent making anti-religious or anti-national remarks or a fabricated video where your opponent concedes defeat. By the time action is taken against these fake videos, they could have gone viral, causing significant damage."

The War of Narratives: Who Will Win?

The fear of edited or altered videos targeting politicians or furthering narratives was realised when five Indian states recently held Assembly elections.

For instance, a video from Kaun Banega Crorepati (KBC), in which host Amitabh Bachchan purportedly asked a contestant a question about Congress leader Kamal Nath, went viral.

The clip showed Bachchan asking, "How many farmers' debts did the Kamal Nath-led government, which was formed in 2018, waive?"

However, the video turned out to be altered. But here's the twist: it was edited in such a manner that it seemed like Bachchan was actually asking the question. How? This is where voice cloning applications come in.

In the past few months, the internet has been flooded with clips generated with the help of voice cloning applications. Videos of PM Modi and footballer Cristiano Ronaldo singing different songs are just a few popular examples. The Quint, too, tried generating such videos using free online applications and was able to produce them in a few minutes.

Edited videos of politicians: The two videos of Mayawati that went viral were also edited to add the respective audio tracks.

Both videos were used to create a narrative that the leader had urged people to vote for a particular party during the polls.

While the first user claimed that a BSP candidate had "surrendered", the other said that Mayawati had asked people to vote for the BJP for the "upliftment of Dalit/exploited/deprived class."

An altered video of Congress leader Kamal Nath, too, went viral with the claim that it showed him promising people that his party would reconsider the abrogation of Article 370 if voted to power.

A fact-check by AltNews revealed that the original video dated back to 2018.

Political parties joining the fray: Recently, the official X handle of Telangana Congress shared a video that showed PM Modi controlling Telangana Chief Minister K Chandrashekar Rao and Asaduddin Owaisi with strings, like a puppeteer.

The video also carried a song in the voice of PM Modi, which was generated using a voice cloning application.

The official handle of Congress, too, shared a video with background music in PM Modi's voice. It took a dig at the prime minister for attending the 2023 World Cup final between India and Australia.

It's true that these videos were not sophisticated and were meant to take a dig at politicians. However, the comments and reactions to them show that not everyone on the internet can differentiate between real and fake videos. Moreover, as the technology advances, deepfakes will become easier to generate, facilitating the spread of disinformation.

Don't believe us? Look at these examples. A video of model Bella Hadid and another of Queen Rania of Jordan went viral on the internet with the claim that both of them were supporting Israel in its ongoing conflict with Hamas. However, as it turned out, both clips were deepfakes.

The video of Hadid garnered more than 26 million views.

Increase in Mis/Disinformation: A Setback for Fact-Checkers?

According to an essay series published by the Observer Research Foundation (ORF), "recent technological advancements in AI, such as deep fakes and language model-based chatbots like ChatGPT, have helped amplify disinformation."

The essay further added that the tools have become accessible to common users, which, in turn, has enabled conspiracy theorists and bad actors to spread disinformation quickly and at a lower cost.

Dutta believes that disinformation on social media platforms will increase exponentially, thanks to generative AI tools. He said, "Today, disinformation campaigns are emboldened because their cost of production has gone down significantly."

The earliest instance of the use of a deepfake during elections dates back to 2020, when one such video of the BJP's Manoj Tiwari went viral ahead of the Assembly elections in Delhi.

According to a report in MIT Technology Review, the leader used a deepfake for campaigning purposes and was seen speaking in Haryanvi and English. The BJP had partnered with The Ideaz Factory, a political communications firm, to create deepfakes to target voters speaking different languages in India.

But why is it a bigger issue today? The answer is simple. The technological revolution has brought highly sophisticated tools to common people at little or almost no cost. This has significantly brought down the cost of generating deepfakes, making it easier for people to spread disinformation.

How To Escape the Spiral of Deepfakes?

The recent debate around AI was triggered when a deepfake of actor Rashmika Mandanna went viral on social media platforms. While several people pointed out that the video was a deepfake, other users believed it to be real.

The Quint published a video pointing out the discrepancies that we noticed while observing the clip carefully.

Some common giveaways:

- The lip movement and the audio will not be properly synchronised.
- Look for unnatural eye movement in the video. For example, if the person does not seem to blink normally or there is a lack of eye movement, then chances are that the video is not real.
- Check for blurry backgrounds or inconsistent lighting.
- Look for body positioning. In the case of Mandanna's video, while her face appeared straight, her body seemed in motion and was blurry. This gave us a hint that the video was indeed a deepfake.
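For readers curious about how the blinking giveaway might be checked automatically, here is a minimal Python sketch. It assumes that some face-tracking tool has already produced one "eye openness" score per video frame (higher means more open); the function names, the 0.2 closed-eye cut-off, and the five-blinks-per-minute floor are all illustrative assumptions, not values from The Quint's analysis or from any specific detection tool.

```python
from typing import Sequence


def count_blinks(eye_openness: Sequence[float], closed_below: float = 0.2) -> int:
    """Count blinks as transitions from an open eye to a closed eye."""
    blinks = 0
    eye_closed = False
    for value in eye_openness:
        if value < closed_below and not eye_closed:
            # The eye has just closed: this starts a new blink.
            blinks += 1
            eye_closed = True
        elif value >= closed_below:
            eye_closed = False
    return blinks


def blinks_per_minute(eye_openness: Sequence[float], fps: float) -> float:
    """Convert the blink count into a per-minute rate for a clip."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    return count_blinks(eye_openness) * 60.0 * fps / len(eye_openness)


def blinking_looks_unnatural(
    eye_openness: Sequence[float], fps: float, min_expected_bpm: float = 5.0
) -> bool:
    """Flag clips whose blink rate falls below an assumed floor."""
    return blinks_per_minute(eye_openness, fps) < min_expected_bpm


# Hypothetical 10-second clip at 30 fps in which the eyes never close.
staring_clip = [0.3] * 300
print(blinking_looks_unnatural(staring_clip, fps=30))
```

A low blink rate is only a hint, much like the other giveaways listed above: a short clip of a real person may contain no blinks at all, so a flag from a check like this should prompt a closer look rather than settle the question.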

The effectiveness of AI detection tools: Several online tools can help detect AI-generated content, such as deepware.ai, play.ht, and ElevenLabs.

However, these tools are still developing and do not give accurate results every time.

When we passed Kamal Nath's altered video through Deepware, we received four results. While the first two results showed no deepfake detected, the other two revealed that the video was indeed edited.
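A split result like this is best treated as inconclusive rather than as a clean bill of health. The Python sketch below shows one way such mixed verdicts could be summarised; it does not call Deepware or any other real service, and the function name and the wording of the labels are hypothetical.

```python
from collections import Counter
from typing import Iterable


def summarise_verdicts(verdicts: Iterable[bool]) -> str:
    """Summarise detector outputs, where True means 'flagged as manipulated'."""
    counts = Counter(verdicts)
    flagged, clean = counts[True], counts[False]
    if flagged == 0 and clean == 0:
        return "no results"
    if flagged == 0:
        return "no manipulation detected"
    if clean == 0:
        return "likely manipulated"
    # Detectors disagree, so the clip needs manual verification.
    return "inconclusive: verify manually"


# Two results found nothing and two flagged the clip, as in the case above.
print(summarise_verdicts([False, False, True, True]))
```

Treating any disagreement as a reason for manual checks is a deliberately cautious design choice, since these tools are still developing.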

Reverse image search: A simple reverse image search can prove to be extremely helpful when checking a suspected deepfake. It can either lead you to the original video, which has been edited to add the audio, or to credible news reports.

People should also rely on credible news reports about recent incidents to avoid falling for deepfakes.

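To give a rough sense of why a reverse image search can trace an edited clip back to its source, the Python sketch below compares two video frames with a simple "average hash". It assumes the frames have already been extracted and shrunk to tiny grayscale grids; the function names and the distance cut-off are illustrative, and real search engines use far more sophisticated matching than this.

```python
from typing import List, Sequence

Grid = Sequence[Sequence[int]]  # small grayscale image, values 0-255


def average_hash(pixels: Grid) -> List[int]:
    """One bit per pixel: 1 if the pixel is brighter than the grid's mean."""
    flat = [value for row in pixels for value in row]
    if not flat:
        raise ValueError("empty image")
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]


def hamming_distance(a: Sequence[int], b: Sequence[int]) -> int:
    """Number of positions at which two equal-length hashes differ."""
    if len(a) != len(b):
        raise ValueError("hashes must have the same length")
    return sum(x != y for x, y in zip(a, b))


def probably_same_frame(frame_a: Grid, frame_b: Grid, max_distance: int = 2) -> bool:
    """Treat frames as matching when their hashes differ in only a few bits."""
    distance = hamming_distance(average_hash(frame_a), average_hash(frame_b))
    return distance <= max_distance


# Hypothetical 4x4 frames: an original, a brightened re-upload, and another scene.
original = [[10, 20, 200, 210], [15, 25, 205, 215]] * 2
reupload = [[value + 10 for value in row] for row in original]
other_scene = [[200, 210, 10, 20], [205, 215, 15, 25]] * 2

print(probably_same_frame(original, reupload))
print(probably_same_frame(original, other_scene))
```

Because a clip with swapped audio usually keeps the original visuals, its frames still match the source footage, which is how the search can surface the unedited video.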

The government is planning to introduce regulations for deepfakes. However, with the general elections around the corner, the proliferation of deepfakes and generative AI content raises concerns about whether regulations will be able to keep up.

(Not convinced of a post or information you came across online and want it verified? Send us the details on WhatsApp at 9540511818, or e-mail it to us at webqoof@thequint.com and we'll fact-check it for you.)